- Table of Contents

- Preface

- 1. Introduction to Networking

- 1.1. History

- 1.2. TCP/IP Networks

- 1.3. UUCP Networks

- 1.4. Linux Networking

- 1.5. Maintaining Your System

- 2. Issues of TCP/IP Networking

- 2.1. Networking Interfaces

- 2.2. IP Addresses

- 2.3. Address Resolution

- 2.4. IP Routing

- 2.5. The Internet Control Message Protocol

- 2.6. Resolving Host Names

- 3. Configuring the Networking Hardware

- 4. Configuring the Serial Hardware

- 5. Configuring TCP/IP Networking

- 6. Name Service and Resolver Configuration

- 6.1. The Resolver Library

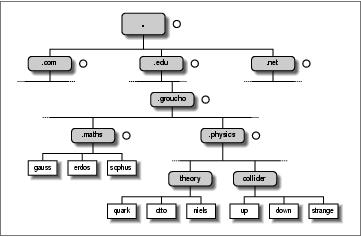

- 6.2. How DNS Works

- 6.3. Running named

- 7. Serial Line IP

- 7.1. General Requirements

- 7.2. SLIP Operation

- 7.3. Dealing with Private IP Networks

- 7.4. Using dip

- 7.5. Running in Server Mode

- 8. The Point-to-Point Protocol

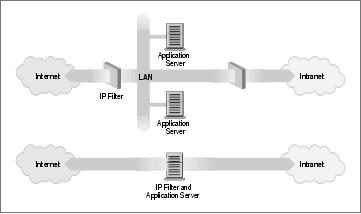

- 9. TCP/IP Firewall

- 9.1. Methods of Attack

- 9.2. What Is a Firewall?

- 9.3. What Is IP Filtering?

- 9.4. Setting Up Linux for Firewalling

- 9.5. Three Ways We Can Do Filtering

- 9.6. Original IP Firewall (2.0 Kernels)

- 9.7. IP Firewall Chains (2.2 Kernels)

- 9.8. Netfilter and IP Tables (2.4 Kernels)

- 9.9. TOS Bit Manipulation

- 9.10. Testing a Firewall Configuration

- 9.11. A Sample Firewall Configuration

- 10. IP Accounting

- 11. IP Masquerade and Network Address Translation

- 12. Important Network Features

- 13. The Network Information System

- 14. The Network File System

- 14.1. Preparing NFS

- 14.2. Mounting an NFS Volume

- 14.3. The NFS Daemons

- 14.4. The exports File

- 14.5. Kernel-Based NFSv2 Server Support

- 14.6. Kernel-Based NFSv3 Server Support

- 15. IPX and the NCP Filesystem

- 15.1. Xerox, Novell, and History

- 15.2. IPX and Linux

- 15.3. Configuring the Kernel for IPX and NCPFS

- 15.4. Configuring IPX Interfaces

- 15.5. Configuring an IPX Router

- 15.6. Mounting a Remote NetWare Volume

- 15.7. Exploring Some of the Other IPX Tools

- 15.8. Printing to a NetWare Print Queue

- 15.9. NetWare Server Emulation

- 16. Managing Taylor UUCP

- 17. Electronic Mail

- 17.1. What Is a Mail Message?

- 17.2. How Is Mail Delivered?

- 17.3. Email Addresses

- 17.4. How Does Mail Routing Work?

- 17.5. Configuring elm

- 18. Sendmail

- 18.1. Introduction to sendmail

- 18.2. Installing sendmail

- 18.3. Overview of Configuration Files

- 18.4. The sendmail.cf and sendmail.mc Files

- 18.5. Generating the sendmail.cf File

- 18.6. Interpreting and Writing Rewrite Rules

- 18.7. Configuring sendmail Options

- 18.8. Some Useful sendmail Configurations

- 18.9. Testing Your Configuration

- 18.10. Running sendmail

- 18.11. Tips and Tricks

- 19. Getting Exim Up and Running

- 19.1. Running Exim

- 19.2. If Your Mail Doesn't Get Through

- 19.3. Compiling Exim

- 19.4. Mail Delivery Modes

- 19.5. Miscellaneous config Options

- 19.6. Message Routing and Delivery

- 19.7. Protecting Against Mail Spam

- 19.8. UUCP Setup

- 20. Netnews

- 20.1. Usenet History

- 20.2. What Is Usenet, Anyway?

- 20.3. How Does Usenet Handle News?

- 21. C News

- 21.1. Delivering News

- 21.2. Installation

- 21.3. The sys File

- 21.4. The active File

- 21.5. Article Batching

- 21.6. Expiring News

- 21.7. Miscellaneous Files

- 21.8. Control Messages

- 21.9. C News in an NFS Environment

- 21.10. Maintenance Tools and Tasks

- 22. NNTP and the nntpd Daemon

- 22.1. The NNTP Protocol

- 22.2. Installing the NNTP Server

- 22.3. Restricting NNTP Access

- 22.4. NNTP Authorization

- 22.5. nntpd Interaction with C News

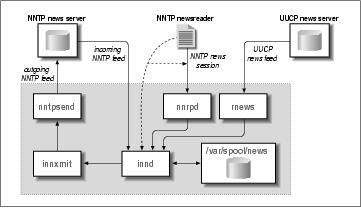

- 23. Internet News

- 23.1. Some INN Internals

- 23.2. Newsreaders and INN

- 23.3. Installing INN

- 23.4. Configuring INN: the Basic Setup

- 23.5. INN Configuration Files

- 23.6. Running INN

- 23.7. Managing INN: The ctlinnd Command

- 24. Newsreader Configuration

- 24.1. tin Configuration

- 24.2. trn Configuration

- 24.3. nn Configuration

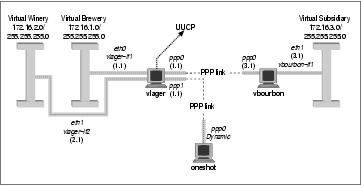

- A. Example Network: The Virtual Brewery

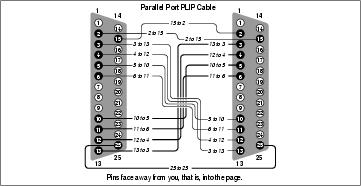

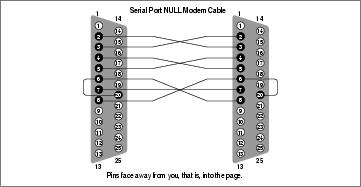

- B. Useful Cable Configurations

- C. Linux Network Administrator's Guide, Second Edition Copyright Information

- C.1. 0. Preamble

- C.2. 1. Applicability and Definitions

- C.3. 2. Verbatim Copying

- C.4. 3. Copying in Quantity

- C.5. 4. Modifications

- C.6. 5. Combining Documents

- C.7. 6. Collections of Documents

- C.8. 7. Aggregation with Independent Works

- C.9. 8. Translation

- C.10. 9. Termination

- C.11. 10. Future Revisions of this License

- D. SAGE: The System Administrators Guild

- Index

- List of Tables

- 2-1. IP Address Ranges Reserved for Private Use

- 4-1. setserial Command-Line Parameters

- 4-2. stty Flags Most Relevant to Configuring Serial Devices

- 7-1. Linux Slip-Line Disciplines

- 7-2. /etc/diphosts Field Description

- 9-1. Common Netmask Bit Values

- 9-2. ICMP Datagram Types

- 9-3. Suggested Uses for TOS Bitmasks

- 13-1. Some Standard NIS Maps and Corresponding Files

- 15-1. XNS, Novell, and TCP/IP Protocol Relationships

- 15-2. ncpmount Command Arguments

- 15-3. Linux Bindery Manipulation Tools

- 15-4. nprint Command-Line Options

- List of Figures

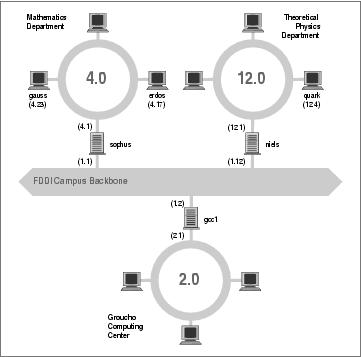

- 1-1. The three steps of sending a datagram from erdos to quark

- 2-1. Subnetting a class B network

- 2-2. A part of the net topology at Groucho Marx University

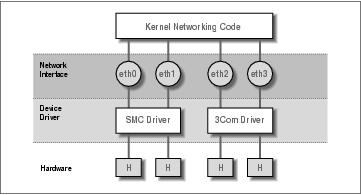

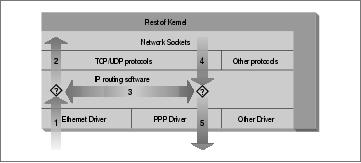

- 3-1. The relationship between drivers, interfaces, and hardware

- 6-1. A part of the domain namespace

- 9-1. The two major classes of firewall design

- 9-2. The stages of IP datagram processing

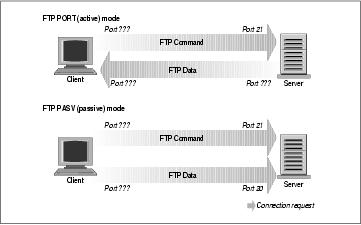

- 9-3. FTP server modes

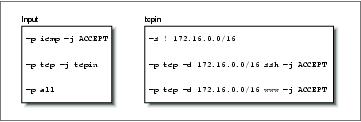

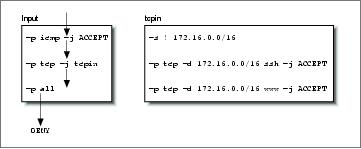

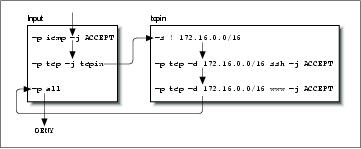

- 9-4. A simple IP chain ruleset

- 9-5. The sequence of rules tested for a received UDP datagram

- 9-6. The rules flow for a received TCP datagram for ssh

- 9-7. The rules flow for a received TCP datagram for telnet

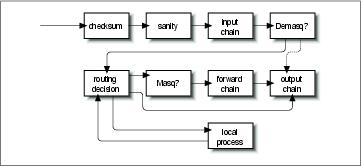

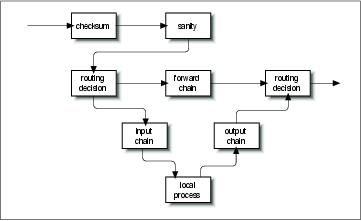

- 9-8. Datagram processing chain in IP chains

- 9-9. Datagram processing chain in netfilter

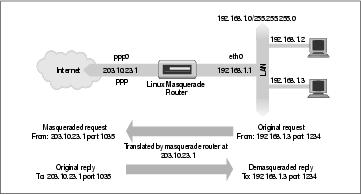

- 11-1. A typical IP masquerade configuration

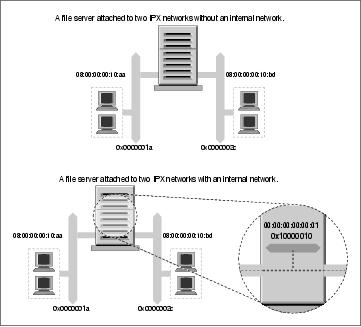

- 15-1. IPX internal network

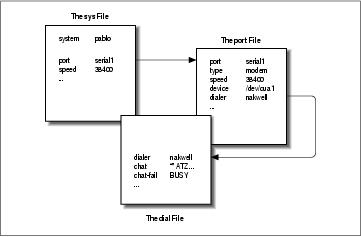

- 16-1. Interaction of Taylor UUCP configuration files

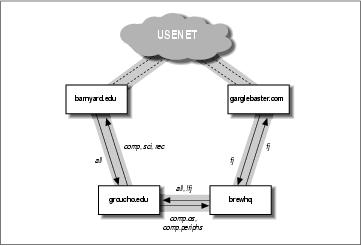

- 20-1. Usenet newsflow through Groucho Marx University

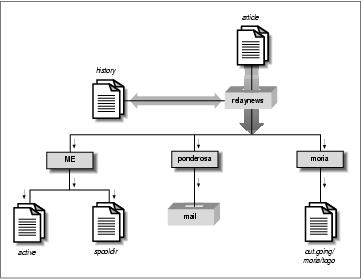

- 21-1. News flow through relaynews

- 23-1. INN architecture (simplified for clarity)

- A-1. The Virtual Brewery and Virtual Winery subnets

- A-2. The Virtual Brewery Network

- B-1. Parallel PLIP cable

- B-2. Serial NULL-Modem cable

- List of Examples

- 4-1. Example rc.serial setserial Commands

- 4-2. Output of setserial -bg /dev/ttyS Command

- 4-3. Example rc.serial stty Commands

- 4-4. Example rc.serial stty Commands Using Modern Syntax

- 4-5. Output of stty -a Command

- 4-6. Sample /etc/mgetty/mgetty.config File

- 6-1. Sample host.conf File

- 6-2. Sample nsswitch.conf File

- 6-3. Sample nsswitch.conf File Using an Action Statement

- 6-4. An Excerpt from the named.hosts File for the Physics Department

- 6-5. An Excerpt from the named.hosts File for GMU

- 6-6. An Excerpt from the named.rev File for Subnet 12

- 6-7. An Excerpt from the named.rev File for Network 149.76

- 6-8. The named.boot File for vlager

- 6-9. The BIND 8 equivalent named.conf File for vlager

- 6-10. The named.ca File

- 6-11. The named.hosts File

- 6-12. The named.local File

- 6-13. The named.rev File

- 7-1. A Sample dip Script

- 12-1. A Sample /etc/inetd.conf File

- 12-2. A Sample /etc/services File

- 12-3. A Sample /etc/protocols File

- 12-4. A Sample /etc/rpc File

- 12-5. Example ssh Client Configuration File

- 13-1. Sample ypserv.securenets File

- 13-2. Sample nsswitch.conf File

- 18-1. Sample Configuration File vstout.smtp.m4

- 18-2. Sample Configuration File vstout.uucpsmtp.m4

- 18-3. Rewrite Rule from vstout.uucpsmtp.m4

- 18-4. Sample aliases File

- 18-5. Sample Output of the mailstats Command

- 18-6. Sample Output of the hoststat Command

Preface

The Internet is now a household term in many countries. With otherwise serious people beginning to joyride along the Information Superhighway, computer networking seems to be moving toward the status of TV sets and microwave ovens. The Internet has unusually high media coverage, and social science majors are descending on Usenet newsgroups, online virtual reality environments, and the Web to conduct research on the new “Internet Culture.”

Of course, networking has been around for a long time. Connecting computers to form local area networks has been common practice, even at small installations, and so have long-haul links using transmission lines provided by telecommunications companies. A rapidly growing conglomerate of world-wide networks has, however, made joining the global village a perfectly reasonable option for even small non-profit organizations of private computer users. Setting up an Internet host with mail and news capabilities offering dialup and ISDN access has become affordable, and the advent of DSL (Digital Subscriber Line) and Cable Modem technologies will doubtlessly continue this trend.

Talking about computer networks often means talking about Unix. Of course, Unix is not the only operating system with network capabilities, nor will it remain a frontrunner forever, but it has been in the networking business for a long time, and will surely continue to be for some time to come.

What makes Unix particularly interesting to private users is that there has been much activity to bring free Unix-like operating systems to the PC, such as 386BSD, FreeBSD, and Linux.

Linux is a freely distributable Unix clone for personal computers. It currently runs on a variety of machines, including the Intel family of processors; Motorola 680x0 machines, such as the Commodore Amiga and Apple Macintosh; Sun SPARC and Ultra-SPARC machines; Compaq Alphas; MIPS; PowerPCs, such as the new generation of Apple Macintosh; and StrongARM, like the rebel.com Netwinder and 3Com Palm machines. Linux has been ported to some relatively obscure platforms, like the Fujitsu AP-1000 and the IBM System/390. Ports to other interesting architectures are currently in progress in developers' labs, and the quest to move Linux into the embedded controller space promises success.

Linux was developed by a large team of volunteers across the Internet. The project was started in 1991 by Linus Torvalds, a Finnish college student, as an operating systems course project. Since that time, Linux has snowballed into a full-featured Unix clone capable of running applications as diverse as simulation and modeling programs, word processors, speech recognition systems, World Wide Web browsers, and a horde of other software, including a variety of excellent games. A great deal of hardware is supported, and Linux contains a complete implementation of TCP/IP networking, including SLIP, PPP, firewalls, a full IPX implementation, and many features and some protocols not found in any other operating system. Linux is powerful, fast, and free, and its popularity in the world beyond the Internet is growing rapidly.

The Linux operating system itself is covered by the GNU General Public License, the same copyright license used by software developed by the Free Software Foundation. This license allows anyone to redistribute or modify the software (free of charge or for a profit) as long as all modifications and distributions are freely distributable as well. The term “free software” refers to freedom of application, not freedom of cost.

1. Purpose and Audience for This Book

This book was written to provide a single reference for network administration in a Linux environment. Beginners and experienced users alike should find the information they need to cover nearly all important administration activities required to manage a Linux network configuration. The possible range of topics to cover is nearly limitless, so of course it has been impossible to include everything there is to say on all subjects. We've tried to cover the most important and common ones. Beginners to Linux networking, even those with no prior exposure to Unix-like operating systems, have found this book good enough to help them get their Linux network configurations up and running and to prepare them to learn more.

There are many books and other sources of information from which you can learn any of the topics covered in this book (with the possible exception of some of the truly Linux-specific features, such as the new Linux firewall interface, which is not well documented elsewhere) in greater depth. We've provided a bibliography for you to use when you are ready to explore more.

2. Sources of Information

If you are new to the world of Linux, there are a number of resources to explore and become familiar with. Having access to the Internet is helpful, but not essential.

- Linux Documentation Project guides

The Linux Documentation Project is a group of volunteers who have worked to produce books (guides), HOWTO documents, and manual pages on topics ranging from installation to kernel programming. The LDP works include:

- Linux Installation and Getting Started

By Matt Welsh, et al. This book describes how to obtain, install, and use Linux. It includes an introductory Unix tutorial and information on systems administration, the X Window System, and networking.

- Linux System Administrators Guide

By Lars Wirzenius and Joanna Oja. This book is a guide to general Linux system administration and covers topics such as creating and configuring users, performing system backups, configuration of major software packages, and installing and upgrading software.

- Linux System Administration Made Easy

By Steve Frampton. This book describes day-to-day administration and maintenance issues of relevance to Linux users.

- Linux Programmers Guide

By B. Scott Burkett, Sven Goldt, John D. Harper, Sven van der Meer, and Matt Welsh. This book covers topics of interest to people who wish to develop application software for Linux.

- The Linux Kernel

By David A. Rusling. This book provides an introduction to the Linux Kernel, how it is constructed, and how it works. Take a tour of your kernel.

- The Linux Kernel Module Programming Guide

By Ori Pomerantz. This guide explains how to write Linux kernel modules.

More manuals are in development. For more information about the LDP you should consult their World Wide Web server at http://www.linuxdoc.org/ or one of its many mirrors.

- HOWTO documents

The Linux HOWTOs are a comprehensive series of papers detailing various aspects of the system, such as installation and configuration of the X Window System software, or how to program in assembly language under Linux. These are generally located in the HOWTO subdirectory of the FTP sites listed later, or they are available on the World Wide Web at one of the many Linux Documentation Project mirror sites. See the Bibliography at the end of this book, or the file HOWTO-INDEX for a list of what's available.

You might want to obtain the Installation HOWTO, which describes how to install Linux on your system; the Hardware Compatibility HOWTO, which contains a list of hardware known to work with Linux; and the Distribution HOWTO, which lists software vendors selling Linux on diskette and CD-ROM.

The bibliography of this book includes references to the HOWTO documents that are related to Linux networking.

- Linux Frequently Asked Questions

The Linux Frequently Asked Questions with Answers (FAQ) contains a wide assortment of questions and answers about the system. It is a must-read for all newcomers.

2.1. Documentation Available via FTP

If you have access to anonymous FTP, you can obtain all Linux documentation listed above from various sites, including metalab.unc.edu:/pub/Linux/docs and tsx-11.mit.edu:/pub/linux/docs.

These sites are mirrored by a number of sites around the world.
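The site names above use the traditional host:/path notation for anonymous FTP. As a minimal sketch of how that notation maps onto an FTP session, the following Python fragment splits it into a hostname and a directory and, given network access, lists the directory. The helper names split_site and list_directory are illustrative only, and the servers named in the text may no longer be reachable.

```python
from ftplib import FTP


def split_site(spec: str) -> tuple[str, str]:
    """Split 'host:/path' notation into a (host, path) pair."""
    host, sep, path = spec.partition(":")
    if not sep or not path.startswith("/"):
        raise ValueError(f"expected host:/path, got {spec!r}")
    return host, path


def list_directory(spec: str) -> list[str]:
    """List a directory on an anonymous FTP server (needs network access)."""
    host, path = split_site(spec)
    with FTP(host, timeout=30) as ftp:
        ftp.login()      # no arguments means an anonymous login
        ftp.cwd(path)    # change to the documentation directory
        return ftp.nlst()


if __name__ == "__main__":
    # Only the parsing step runs here; call list_directory() yourself
    # if the server is still online.
    host, path = split_site("metalab.unc.edu:/pub/Linux/docs")
    print(f"ftp://{host}{path}")
```

Keeping the parsing separate from the network call makes it easy to substitute a mirror site without touching the session logic.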

2.2. Documentation Available via WWW

There are many Linux-based WWW sites available. The home site for the Linux Documentation Project can be accessed at http://www.linuxdoc.org/.

The Open Source Writers Guild (OSWG) is a project whose scope extends beyond Linux. The OSWG, like this book, is committed to advocating and facilitating the production of OpenSource documentation. The OSWG home site is at http://www.oswg.org:8080/oswg.

Both of these sites contain hypertext (and other) versions of many Linux related documents.

2.3. Documentation Available Commercially

A number of publishing companies and software vendors publish the works of the Linux Documentation Project. Two such vendors are:

Specialized Systems Consultants, Inc. (SSC)

http://www.ssc.com/

P.O. Box 55549 Seattle, WA 98155-0549

1-206-782-7733

1-206-782-7191 (FAX)

sales@ssc.com

and:

Linux Systems Labs

http://www.lsl.com/

18300 Tara Drive

Clinton Township, MI 48036

1-810-987-8807

1-810-987-3562 (FAX)

sales@lsl.com

Both companies sell compendiums of Linux HOWTO documents and other Linux documentation in printed and bound form.

O'Reilly & Associates publishes a series of Linux books. This one is a work of the Linux Documentation Project, but most have been independently authored. Their range includes:

- Running Linux

An installation and user guide to the system describing how to get the most out of personal computing with Linux.

- Learning Debian GNU/Linux, Learning Red Hat Linux

More basic than Running Linux, these books contain popular distributions on CD-ROM and offer robust directions for setting them up and using them.

- Linux in a Nutshell

Another in the successful "in a Nutshell" series, this book focuses on providing a broad reference text for Linux.

2.4. Linux Journal and Linux Magazine

Linux Journal and Linux Magazine are monthly magazines for the Linux community, written and published by a number of Linux activists. They contain articles ranging from novice questions and answers to kernel programming internals. Even if you have Usenet access, these magazines are a good way to stay in touch with the Linux community.

Linux Journal is the older of the two magazines and is published by SSC, whose contact details were listed previously. You can also find the magazine on the World Wide Web at http://www.linuxjournal.com/.

Linux Magazine is a newer, independent publication. The home web site for the magazine is http://www.linuxmagazine.com/.

2.5. Linux Usenet Newsgroups

If you have access to Usenet news, the following Linux-related newsgroups are available:

- comp.os.linux.announce

A moderated newsgroup containing announcements of new software, distributions, bug reports, and goings-on in the Linux community. All Linux users should read this group. Submissions may be mailed to linux-announce@news.ornl.gov.

- comp.os.linux.help

General questions and answers about installing or using Linux.

- comp.os.linux.admin

Discussions relating to systems administration under Linux.

- comp.os.linux.networking

Discussions relating to networking with Linux.

- comp.os.linux.development

Discussions about developing the Linux kernel and system itself.

- comp.os.linux.misc

A catch-all newsgroup for miscellaneous discussions that don't fall under the previous categories.

There are also several newsgroups devoted to Linux in languages other than English, such as fr.comp.os.linux in French and de.comp.os.linux in German.

2.6. Linux Mailing Lists

There is a large number of specialist Linux mailing lists on which you will find many people willing to help with questions you might have.

The best-known of these are the lists hosted by Rutgers University. You may subscribe to these lists by sending an email message formatted as follows:

To: majordomo@vger.rutgers.edu

Subject: anything at all

Body: subscribe listname

Some of the available lists related to Linux networking are:

- linux-net

Discussion relating to Linux networking

- linux-ppp

Discussion relating to the Linux PPP implementation

- linux-kernel

Discussion relating to Linux kernel development
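The subscription request described above can be sketched with Python's standard email library. This is only an illustration of the message layout: the function name build_subscribe_request and the sender address you@example.org are placeholders, and the list server named in the text may no longer be in service.

```python
from email.message import EmailMessage

# Address of the list server as given in the text.
MAJORDOMO_ADDRESS = "majordomo@vger.rutgers.edu"


def build_subscribe_request(listname: str, sender: str) -> EmailMessage:
    """Compose a subscription request for the named mailing list."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = MAJORDOMO_ADDRESS
    msg["Subject"] = "anything at all"   # the text says any subject will do
    msg.set_content(f"subscribe {listname}\n")
    return msg


if __name__ == "__main__":
    # "you@example.org" is a placeholder sender address.
    request = build_subscribe_request("linux-net", "you@example.org")
    print(request)
```

To actually send the request you would hand the message to a mail server, for example with smtplib.SMTP("localhost").send_message(request), assuming a mail transport agent is listening on the local machine.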

2.7. Online Linux Support

There are many ways of obtaining help online, where volunteers from around the world offer expertise and services to assist users with questions and problems.

The OpenProjects IRC Network is an IRC network devoted entirely to Open Projects—Open Source and Open Hardware alike. Some of its channels are designed to provide online Linux support services. IRC stands for Internet Relay Chat, and is a network service that allows you to talk interactively on the Internet to other users. IRC networks support multiple channels on which groups of people talk. Whatever you type in a channel is seen by all other users of that channel.

There are a number of active channels on the OpenProjects IRC network where you will find users 24 hours a day, 7 days a week who are willing and able to help you solve any Linux problems you may have, or just chat. You can use this service by installing an IRC client like irc-II, connecting to the server irc.openprojects.org on port 6667, and joining the #linpeople channel.
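For readers curious about what an IRC client does when it connects and joins a channel, here is a rough sketch of the text lines involved, following the command forms in RFC 2812. The nickname guest and both function names are made up for illustration; the fragment only builds the lines and does not open a connection.

```python
def parse_server(spec: str, default_port: int = 6667) -> tuple[str, int]:
    """Split 'host:port' into (host, port), using 6667 if no port is given."""
    host, sep, port = spec.partition(":")
    return host, int(port) if sep else default_port


def registration_lines(nick: str, channel: str) -> list[bytes]:
    """Return the raw lines a client sends to register and join a channel."""
    commands = [
        f"NICK {nick}",
        f"USER {nick} 0 * :{nick}",   # user name, mode, unused, real name
        f"JOIN {channel}",
    ]
    # Every IRC line is terminated by a carriage return and line feed.
    return [(command + "\r\n").encode("ascii") for command in commands]


if __name__ == "__main__":
    host, port = parse_server("irc.openprojects.org:6667")
    print(f"would connect to {host} on port {port}")
    for line in registration_lines("guest", "#linpeople"):
        print(line)
```

A real client opens a TCP connection to the server, sends the NICK and USER lines, waits for the server's welcome reply before sending JOIN, and answers the server's periodic PING messages; an IRC client such as irc-II handles all of that for you.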

2.8. Linux User Groups

Many Linux User Groups around the world offer direct support to users. Many Linux User Groups engage in activities such as installation days, talks and seminars, demonstration nights, and other completely social events. Linux User Groups are a great way of meeting other Linux users in your area. There are a number of published lists of Linux User Groups. Some of the better-known ones are:

- Groups of Linux Users Everywhere

http://www.ssc.com/glue/groups/

- LUG list project

http://www.nllgg.nl/lugww/

- LUG registry

http://www.linux.org/users/

2.9. Obtaining Linux

There is no single distribution of the Linux software; instead, there are many distributions, such as Debian, RedHat, Caldera, Corel, SuSE, and Slackware. Each distribution contains everything you need to run a complete Linux system: the kernel, basic utilities, libraries, support files, and applications software.

Linux distributions may be obtained via a number of online sources, such as the Internet. Each of the major distributions has its own FTP and web site. Some of these sites are:

- Caldera

http://www.caldera.com/ ftp://ftp.caldera.com/

- Corel

http://www.corel.com/ ftp://ftp.corel.com/

- Debian

http://www.debian.org/ ftp://ftp.debian.org/

- RedHat

http://www.redhat.com/ ftp://ftp.redhat.com/

- Slackware

http://www.slackware.com/ ftp://ftp.slackware.com/

- SuSE

http://www.suse.com/ ftp://ftp.suse.com/

General FTP archive sites that also carry Linux distributions include:

metalab.unc.edu:/pub/Linux/distributions/

ftp.funet.fi:/pub/Linux/mirrors/

tsx-11.mit.edu:/pub/linux/distributions/

mirror.aarnet.edu.au:/pub/linux/distributions/

Many of the modern distributions can be installed directly from the Internet. There is a lot of software to download for a typical installation, though, so you'd probably want to do this only if you have a high-speed, permanent network connection, or if you just need to update an existing installation.[1]

Linux may be purchased on CD-ROM from an increasing number of software vendors. If your local computer store doesn't have it, perhaps you should ask them to stock it! Most of the popular distributions can be obtained on CD-ROM. Some vendors produce products containing multiple CD-ROMs, each of which provides a different Linux distribution. This is an ideal way to try a number of different distributions before you settle on your favorite one.

3. File System Standards

In the past, one of the problems that afflicted Linux distributions, as well as the packages of software running on Linux, was the lack of a single accepted filesystem layout. This resulted in incompatibilities between different packages, and confronted users and administrators with the task of locating various files and programs.

To improve this situation, in August 1993, several people formed the Linux File System Standard Group (FSSTND). After six months of discussion, the group created a draft that presents a coherent filesystem structure and defines the location of the most essential programs and configuration files.

This standard was supposed to have been implemented by most major Linux distributions and packages. Unfortunately, while most distributions have made some attempt to work toward the FSSTND, only a very small number have actually adopted it fully. Throughout this book, we will assume that any files discussed reside in the location specified by the standard; alternative locations will be mentioned only when there is a long tradition that conflicts with this specification.

The Linux FSSTND continued to develop, but was replaced by the Linux File Hierarchy Standard (FHS) in 1997. The FHS addresses the multi-architecture issues that the FSSTND did not. The FHS can be obtained from the Linux documentation directory of all major Linux FTP sites and their mirrors, or at its home site at http://www.pathname.com/fhs/. Daniel Quinlan, the coordinator of the FHS group, can be reached at quinlan@transmeta.com.

4. Standard Linux Base

The vast number of different Linux distributions, while providing lots of healthy choice for Linux users, has created a problem for software developers—particularly developers of non-free software.

Each distribution packages and supplies certain base libraries, configuration tools, system applications, and configuration files. Unfortunately, differences in their versions, names, and locations make it very difficult to know what will exist on any distribution. This makes it hard to develop binary applications that will work reliably on all Linux distribution bases.

To help overcome this problem, a new project sprang up called the “Linux Standard Base.” It aims to describe a standard base distribution that complying distributions will use. If a developer designs an application to work against the standard base platform, the application will work on, and be portable to, any complying Linux distribution.

You can find information on the status of the Linux Standard Base project at its home web site at http://www.linuxbase.org/.

If you're concerned about interoperability, particularly of software from commercial vendors, you should ensure that your Linux distribution is making an effort to participate in the standardization project.

5. About This Book

When Olaf joined the Linux Documentation Project in 1992, he wrote two small chapters on UUCP and smail, which he meant to contribute to the System Administrator's Guide. Development of TCP/IP networking was just beginning, and when those “small chapters” started to grow, he wondered aloud whether it would be nice to have a Networking Guide. “Great!” everyone said. “Go for it!” So he went for it and wrote the first version of the Networking Guide, which was released in September 1993.

Olaf continued work on the Networking Guide and eventually produced a much enhanced version of the guide. Vince Skahan contributed the original sendmail mail chapter, which was completely replaced in this edition because of a new interface to the sendmail configuration.

The version of the guide that you are reading now is a revision and update prompted by O'Reilly & Associates and undertaken by Terry Dawson.[2] Terry has been an amateur radio operator for over 20 years and has worked in the telecommunications industry for over 15 of those. He was co-author of the original NET-FAQ, and has since authored and maintained various networking-related HOWTO documents. Terry has always been an enthusiastic supporter of the Network Administrators Guide project, and added a few new chapters to this version describing features of Linux networking that have been developed since the first edition, plus a bunch of changes to bring the rest of the book up to date.

The exim chapter was contributed by Philip Hazel,[3] who is a lead developer and maintainer of the package.

The book is organized roughly along the sequence of steps you have to take to configure your system for networking. It starts by discussing basic concepts of networks, and TCP/IP-based networks in particular. It then slowly works its way up from configuring TCP/IP at the device level to firewall, accounting, and masquerade configuration, to the setup of common applications such as rlogin and friends, the Network File System, and the Network Information System. This is followed by a chapter on how to set up your machine as a UUCP node. Most of the remaining sections are dedicated to two major applications that run on top of TCP/IP and UUCP: electronic mail and news. A special chapter has been devoted to the IPX protocol and the NCP filesystem, because these are used in many corporate environments where Linux is finding a home.

The email part features an introduction to the more intimate parts of mail transport and routing, and the myriad of addressing schemes you may be confronted with. It describes the configuration and management of exim, a mail transport agent ideal for use in most situations not requiring UUCP, and sendmail, which is for people who have to do more complicated routing involving UUCP.

The news part gives you an overview of how Usenet news works. It covers INN and C News, the two most widely used news transport software packages at the moment, and the use of NNTP to provide newsreading access to a local network. The book closes with a chapter on the care and feeding of the most popular newsreaders on Linux.

Of course, a book can never exhaustively answer all questions you might have. So if you follow the instructions in this book and something still does not work, please be patient. Some of your problems may be due to mistakes on our part (see Section 9, later in this Preface), but they also may be caused by changes in the networking software. Therefore, you should check the listed information resources first. There's a good chance that you are not alone with your problems, so a fix or at least a proposed workaround is likely to be known. If you have the opportunity, you should also try to get the latest kernel and network release from one of the Linux FTP sites or a BBS near you. Many problems are caused by software from different stages of development, which fails to work together properly. After all, Linux is a “work in progress.”

6. The Official Printed Version

In autumn 1993, Andy Oram, who had been around the LDP mailing list from almost the very beginning, asked Olaf about publishing this book at O'Reilly & Associates. Olaf was excited about the idea, never having imagined that the book would become this successful. He and Andy finally agreed that O'Reilly would produce an enhanced Official Printed Version of the Networking Guide, while Olaf retained the original copyright so that the source of the book could be freely distributed. This means that you can choose freely: you can get the various free forms of the document from your nearest Linux Documentation Project mirror site and print it out, or you can purchase the official printed version from O'Reilly.

Why, then, would you want to pay money for something you can get for free? Is Tim O'Reilly out of his mind for publishing something everyone can print and even sell themselves?[4] Is there any difference between these versions?

The answers are “it depends,” “no, definitely not,” and “yes and no.” O'Reilly & Associates does take a risk in publishing the Networking Guide, and it seems to have paid off for them (they've asked us to do it again). We believe this project serves as a fine example of how the free software world and companies can cooperate to produce something both can benefit from. In our view, the great service O'Reilly is providing to the Linux community (apart from the book becoming readily available in your local bookstore) is that it has helped Linux become recognized as something to be taken seriously: a viable and useful alternative to other commercial operating systems. It's a sad technical bookstore that doesn't have at least one shelf stacked with O'Reilly Linux books.

Why are they publishing it? They see it as their kind of book. It's what they'd hope to produce if they contracted with an author to write about Linux. The pace, level of detail, and style fit in well with their other offerings.

The point of the LDP license is to make sure no one gets shut out. Other people can print out copies of this book, and no one will blame you if you get one of these copies. But if you haven't gotten a chance to see the O'Reilly version, try to get to a bookstore or look at a friend's copy. We think you'll like what you see, and will want to buy it for yourself.

So what about the differences between the printed and online versions? Andy Oram has made great efforts at transforming our ramblings into something actually worth printing. (He has also reviewed a few other books produced by the Linux Documentation Project, contributing whatever professional skills he can to the Linux community.)

Since Andy started reviewing the Networking Guide and editing the copies sent to him, the book has improved vastly from its original form, and with every round of submission and feedback it improves again. The opportunity to take advantage of a professional editor's skill is one not to be wasted. In many ways, Andy's contribution has been as important as that of the authors. The same is also true of the copyeditors, who got the book into the shape you see now. All these edits have been fed back into the online version, so there is no difference in content.

Still, the O'Reilly version will be different. It will be professionally bound, and while you may go to the trouble to print the free version, it is unlikely that you will get the same quality result, and even less likely that you'll do it for the price. In addition, our amateurish attempts at illustration will have been replaced with nicely redone figures by O'Reilly's professional artists. Indexers have generated an improved index, which makes locating information in the book a much simpler process. If this book is something you intend to read from start to finish, you should consider reading the official printed version.

7. Overview

Chapter 1 discusses the history of Linux and covers basic networking information on UUCP, TCP/IP, various protocols, hardware, and security. The next few chapters deal with configuring Linux for TCP/IP networking and running some major applications. We examine IP a little more closely in Chapter 2 before getting our hands dirty with file editing and the like. If you already know how IP routing works and how address resolution is performed, you can skip this chapter.

Chapter 3 deals with very basic configuration issues, such as building a kernel and setting up your Ethernet card. The configuration of your serial ports is covered separately in Chapter 4 because the discussion does not apply to TCP/IP networking only, but is also relevant for UUCP.

Chapter 5 helps you set up your machine for TCP/IP networking. It contains installation hints for standalone hosts with loopback enabled only, and hosts connected to an Ethernet. It also introduces you to a few useful tools you can use to test and debug your setup. Chapter 6 discusses how to configure hostname resolution and explains how to set up a name server.

Chapter 7 explains how to establish SLIP connections and gives a detailed reference for dip, a tool that allows you to automate most of the necessary steps. Chapter 8 covers PPP and pppd, the PPP daemon.

Chapter 9 extends our discussion on network security and describes the Linux TCP/IP firewall and its configuration tools: ipfwadm, ipchains, and iptables. IP firewalling provides a means of controlling who can access your network and hosts very precisely.

Chapter 10 explains how to configure IP Accounting in Linux so you can keep track of how much traffic is going where and who is generating it.

Chapter 11 covers a feature of the Linux networking software called IP masquerade, which allows whole IP networks to connect to and use the Internet through a single IP address, hiding internal systems from outsiders in the process.

Chapter 12 gives a short introduction to setting up some of the most important network applications, such as rlogin, ssh, etc. This chapter also covers how services are managed by the inetd super server, and how you may restrict certain security-relevant services to a set of trusted hosts.

Chapter 13 and Chapter 14 discuss NIS and NFS. NIS is a tool used to distribute administrative information, such as user passwords, in a local area network. NFS allows you to share filesystems between several hosts in your network.

In Chapter 15, we discuss the IPX protocol and the NCP filesystem. These allow Linux to be integrated into a Novell NetWare environment, sharing files and printers with non-Linux machines.

Chapter 16 gives you an extensive introduction to the administration of Taylor UUCP, a free implementation of the UUCP suite.

The remainder of the book is taken up by a detailed tour of electronic mail and Usenet news. Chapter 17 introduces you to the central concepts of electronic mail, like what a mail address looks like, and how the mail handling system manages to get your message to the recipient.

Chapter 18 and Chapter 19 cover the configuration of sendmail and exim, two mail transport agents you can use for Linux. This book explains both of them, because exim is easier to install for the beginner, while sendmail provides support for UUCP.

Chapter 20 through Chapter 23 explain the way news is managed in Usenet and how you install and use C News, nntpd, and INN: three popular software packages for managing Usenet news. After the brief introduction in Chapter 20, you can read Chapter 21 if you want to transfer news using C News, a traditional service generally used with UUCP. The following chapters discuss more modern alternatives to C News that use the Internet-based protocol NNTP (Network News Transfer Protocol). Chapter 22 covers how to set up a simple NNTP daemon, nntpd, to provide news reading access for a local network, while Chapter 23 describes a more robust server for more extensive NetNews transfers, the InterNet News daemon (INN). And finally, Chapter 24 shows you how to configure and maintain various newsreaders.

8. Conventions Used in This Book

All examples presented in this book assume you are using an sh-compatible shell. The bash shell is sh-compatible and is the standard shell of all Linux distributions. If you happen to be a csh user, you will have to make appropriate adjustments.

The following is a list of the typographical conventions used in this book:

- Italic

Used for file and directory names, program and command names, command-line options, email addresses and pathnames, URLs, and for emphasizing new terms.

- Boldface

Used for machine names, hostnames, site names, usernames and IDs, and for occasional emphasis.

- Constant Width

Used in examples to show the contents of code files or the output from commands and to indicate environment variables and keywords that appear in code.

- Constant Width Italic

Used to indicate variable options, keywords, or text that the user is to replace with an actual value.

- Constant Width Bold

Used in examples to show commands or other text that should be typed literally by the user.

Text appearing in this manner offers a warning. You can make a mistake here that hurts your system or is hard to recover from.

9. Submitting Changes

We have tested and verified the information in this book to the best of our ability, but you may find that features have changed (or even that we have made mistakes!). Please let us know about any errors you find, as well as your suggestions for future editions, by writing to:

O'Reilly & Associates, Inc.

101 Morris Street

Sebastopol, CA 95472

1-800-998-9938 (in the U.S. or Canada)

1-707-829-0515 (international or local)

1-707-829-0104 (FAX)

You can send us messages electronically. To be put on the mailing list or request a catalog, send email to:

info@oreilly.com

To ask technical questions or comment on the book, send email to:

bookquestions@oreilly.com

We have a web site for the book, where we'll list examples, errata, and any plans for future editions. You can access this page at:

http://www.oreilly.com/catalog/linag2

For more information about this book and others, see the O'Reilly web site:

http://www.oreilly.com

10. Acknowledgments

This edition of the Networking Guide owes almost everything to the outstanding work of Olaf and Vince. It is difficult to appreciate the effort that goes into researching and writing a book of this nature until you've had a chance to work on one yourself. Updating the book was a challenging task, but with an excellent base to work from, it was an enjoyable one.

This book owes very much to the numerous people who took the time to proof-read it and help iron out many mistakes, both technical and grammatical (never knew that there was such a thing as a dangling participle). Phil Hughes, John Macdonald, and Erik Ratcliffe all provided very helpful (and on the whole, quite consistent) feedback on the content of the book.

We also owe many thanks to the people at O'Reilly we've had the pleasure to work with: Sarah Jane Shangraw, who got the book into the shape you can see now; Maureen Dempsey, who copyedited the text; Rob Romano, Rhon Porter, and Chris Reilley, who created all the figures; Hanna Dyer, who designed the cover; Alicia Cech, David Futato, and Jennifer Niedherst for the internal layout; Lars Kaufman for suggesting old woodcuts as a visual theme; Judy Hoer for the index; and finally, Tim O'Reilly for the courage to take up such a project.

We are greatly indebted to Andres Sepúlveda, Wolfgang Michaelis, Michael K. Johnson, and all developers who spared the time to check the information provided in the Networking Guide. Phil Hughes, John MacDonald, and Eric Ratcliffe contributed invaluable comments on the second edition. We also wish to thank all those who read the first version of the Networking Guide and sent corrections and suggestions. You can find a hopefully complete list of contributors in the file Thanks in the online distribution. Finally, this book would not have been possible without the support of Holger Grothe, who provided Olaf with the Internet connectivity he needed to make the original version happen.

Olaf would also like to thank the following groups and companies that printed the first edition of the Networking Guide and have donated money either to him or to the Linux Documentation Project as a whole: Linux Support Team, Erlangen, Germany; S.u.S.E. GmbH, Fuerth, Germany; Linux System Labs, Inc., Clinton Twp., United States; and RedHat Software, North Carolina, United States.

Terry thanks his wife, Maggie, who patiently supported him throughout his participation in the project despite the challenges presented by the birth of their first child, Jack. Additionally, he thanks the many people of the Linux community who either nurtured or suffered him to the point at which he could actually take part and actively contribute. “I'll help you if you promise to help someone else in return.”

10.1. The Hall of Fame

Besides those we have already mentioned, a large number of people have contributed to the Networking Guide, by reviewing it and sending us corrections and suggestions. We are very grateful.

Here is a list of those whose contributions left a trace in our mail folders.

Al Longyear, Alan Cox, Andres Sepúlveda, Ben Cooper, Cameron Spitzer, Colin McCormack, D.J. Roberts, Emilio Lopes, Fred N. van Kempen, Gert Doering, Greg Hankins, Heiko Eissfeldt, J.P. Szikora, Johannes Stille, Karl Eichwalder, Les Johnson, Ludger Kunz, Marc van Diest, Michael K. Johnson, Michael Nebel, Michael Wing, Mitch D'Souza, Paul Gortmaker, Peter Brouwer, Peter Eriksson, Phil Hughes, Raul Deluth Miller, Rich Braun, Rick Sladkey, Ronald Aarts, Swen Thüemmler, Terry Dawson, Thomas Quinot, and Yury Shevchuk.

Chapter 1. Introduction to Networking

1.1. History

The idea of networking is probably as old as telecommunications itself. Consider people living in the Stone Age, when drums may have been used to transmit messages between individuals. Suppose caveman A wants to invite caveman B over for a game of hurling rocks at each other, but they live too far apart for B to hear A banging his drum. What are A's options? He could 1) walk over to B's place, 2) get a bigger drum, or 3) ask C, who lives halfway between them, to forward the message. The last option is called networking.

Of course, we have come a long way from the primitive pursuits and devices of our forebears. Nowadays, we have computers talk to each other over vast assemblages of wires, fiber optics, microwaves, and the like, to make an appointment for Saturday's soccer match.[5] In the following description, we will deal with the means and ways by which this is accomplished, but leave out the wires, as well as the soccer part.

We will describe three types of networks in this guide. We will focus on TCP/IP most heavily because it is the most popular protocol suite in use on both Local Area Networks (LANs) and Wide Area Networks (WANs), such as the Internet. We will also take a look at UUCP and IPX. UUCP was once commonly used to transport news and mail messages over dialup telephone connections. It is less common today, but is still useful in a variety of situations. The IPX protocol is used most commonly in the Novell NetWare environment and we'll describe how to use it to connect your Linux machine into a Novell network. Each of these is a networking protocol used to carry data between host computers. We'll discuss how they are used and introduce you to their underlying principles.

We define a network as a collection of hosts that are able to communicate with each other, often by relying on the services of a number of dedicated hosts that relay data between the participants. Hosts are often computers, but need not be; one can also think of X terminals or intelligent printers as hosts. Small agglomerations of hosts are also called sites.

Communication is impossible without some sort of language or code. In computer networks, these languages are collectively referred to as protocols. However, you shouldn't think of written protocols here, but rather of the highly formalized code of behavior observed when heads of state meet, for instance. In a very similar fashion, the protocols used in computer networks are nothing but very strict rules for the exchange of messages between two or more hosts.

1.2. TCP/IP Networks

Modern networking applications require a sophisticated approach to carrying data from one machine to another. If you are managing a Linux machine that has many users, each of whom may wish to simultaneously connect to remote hosts on a network, you need a way of allowing them to share your network connection without interfering with each other. The approach that a large number of modern networking protocols use is called packet-switching. A packet is a small chunk of data that is transferred from one machine to another across the network. The switching occurs as each packet is carried across each link in the network. A packet-switched network shares a single network link among many users by alternately sending packets from one user to another across that link.
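The idea of several users sharing one link can be sketched in a few lines of Python. This is only an illustration, not how any real network stack is written: the function names `packetize` and `share_link`, the 4-byte packet size, and the strict round-robin order are all assumptions made for the example.

```python
from itertools import zip_longest

def packetize(user, data, size=4):
    """Split one user's data into (user, chunk) packets of at most `size` bytes."""
    return [(user, data[i:i + size]) for i in range(0, len(data), size)]

def share_link(*streams):
    """Interleave packets from several users onto one link, round-robin."""
    link = []
    for round_ in zip_longest(*streams):
        # zip_longest pads exhausted streams with None; skip the padding.
        link.extend(packet for packet in round_ if packet is not None)
    return link

alice = packetize("alice", b"hello world")
bob = packetize("bob", b"12345678")
for user, chunk in share_link(alice, bob):
    print(user, chunk)
```

Because every packet carries the name of its owner, the receiving side can pick out one user's chunks and join them back together in order, even though they were mixed with someone else's traffic on the link.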

The solution that Unix systems, and subsequently many non-Unix systems, have adopted is known as TCP/IP. When talking about TCP/IP networks you will hear the term datagram, which technically has a special meaning but is often used interchangeably with packet. In this section, we will have a look at underlying concepts of the TCP/IP protocols.

1.2.1. Introduction to TCP/IP Networks

TCP/IP traces its origins to a research project funded by the United States Defense Advanced Research Projects Agency (DARPA) in 1969. The ARPANET was an experimental network that was converted into an operational one in 1975 after it had proven to be a success.

In 1983, the new protocol suite TCP/IP was adopted as a standard, and all hosts on the network were required to use it. When ARPANET finally grew into the Internet (with ARPANET itself passing out of existence in 1990), the use of TCP/IP had spread to networks beyond the Internet itself. Many companies have now built corporate TCP/IP networks, and the Internet has grown to a point at which it could almost be considered a mainstream consumer technology. It is difficult to read a newspaper or magazine now without seeing reference to the Internet; almost everyone can now use it.

For something concrete to look at as we discuss TCP/IP throughout the following sections, we will consider Groucho Marx University (GMU), situated somewhere in Fredland, as an example. Most departments run their own Local Area Networks, while some share one and others run several of them. They are all interconnected and hooked to the Internet through a single high-speed link.

Suppose your Linux box is connected to a LAN of Unix hosts at the Mathematics department, and its name is erdos. To access a host at the Physics department, say quark, you enter the following command:

    $ rlogin quark.physics
    Welcome to the Physics Department at GMU
    (ttyq2) login:

At the prompt, you enter your login name, say andres, and your password. You are then given a shell[6] on quark, to which you can type as if you were sitting at the system's console. After you exit the shell, you are returned to your own machine's prompt. You have just used one of the instantaneous, interactive applications that TCP/IP provides: remote login.

While logged in to quark, you might also want to run a graphical user interface application, like a word processing program, a graphics drawing program, or even a World Wide Web browser. The X Window System is a fully network-aware graphical user environment, and it is available for many different computing systems. To tell this application that you want to have its windows displayed on your host's screen, you have to set the DISPLAY environment variable:

    $ DISPLAY=erdos.maths:0.0
    $ export DISPLAY

If you now start your application, it will contact your X server instead of quark's, and display all its windows on your screen. Of course, this requires that you have X11 running on erdos. The point here is that TCP/IP allows quark and erdos to send X11 packets back and forth to give you the illusion that you're on a single system. The network is almost transparent here.

Another very important application in TCP/IP networks is NFS, which stands for Network File System. It is another form of making the network transparent, because it basically allows you to treat directory hierarchies from other hosts as if they were local file systems, so that they look like any other directories on your host. For example, all users' home directories can be kept on a central server machine from which all other hosts on the LAN mount them. The effect is that users can log in to any machine and find themselves in the same home directory. Similarly, it is possible to share large amounts of data (such as a database, documentation or application programs) among many hosts by maintaining one copy of the data on a server and allowing other hosts to access it. We will come back to NFS in Chapter 14.

Of course, these are only examples of what you can do with TCP/IP networks. The possibilities are almost limitless, and we'll introduce you to more as you read on through the book.

We will now have a closer look at the way TCP/IP works. This information will help you understand how and why you have to configure your machine. We will start by examining the hardware, and slowly work our way up.

1.2.2. Ethernets

The most common type of LAN hardware is known as Ethernet. In its simplest form, it consists of a single cable with hosts attached to it through connectors, taps, or transceivers. Simple Ethernets are relatively inexpensive to install, which, together with a net transfer rate of 10, 100, or even 1,000 Megabits per second, accounts for much of Ethernet's popularity.

Ethernets come in three flavors: thick, thin, and twisted pair. Thin and thick Ethernet each use a coaxial cable, differing in diameter and the way you may attach a host to this cable. Thin Ethernet uses a T-shaped “BNC” connector, which you insert into the cable and twist onto a plug on the back of your computer. Thick Ethernet requires that you drill a small hole into the cable, and attach a transceiver using a “vampire tap.” One or more hosts can then be connected to the transceiver. Thin and thick Ethernet cable can run for a maximum of 200 and 500 meters respectively, and are also called 10base-2 and 10base-5. The “base” refers to “baseband modulation” and simply means that the data is directly fed onto the cable without any modem. The number at the start refers to the speed in Megabits per second, and the number at the end is the maximum length of the cable in hundreds of meters. Twisted pair uses a cable made of two pairs of copper wires and usually requires additional hardware known as active hubs. Twisted pair is also known as 10base-T, the “T” meaning twisted pair. The 100 Megabits per second version is known as 100base-T.

To add a host to a thin Ethernet installation, you have to disrupt network service for at least a few minutes because you have to cut the cable to insert the connector. Although adding a host to a thick Ethernet system is a little complicated, it does not typically bring down the network. Twisted pair Ethernet is even simpler. It uses a device called a “hub,” which serves as an interconnection point. You can insert and remove hosts from a hub without interrupting any other users at all.

Many people prefer thin Ethernet for small networks because it is very inexpensive; PC cards come for as little as US $30 (many companies are literally throwing them out now), and cable is in the range of a few cents per meter. However, for large-scale installations, either thick Ethernet or twisted pair is more appropriate. For example, GMU's Mathematics Department originally chose thick Ethernet because the cable must take a long route and because traffic is not disrupted each time a host is added to the network. Twisted pair is now very common in a variety of installations. Hub hardware is dropping in price, and small units are now available at a price that is attractive even for small domestic networks. Twisted pair cabling can be significantly cheaper for large installations, and the cable itself is much more flexible than the coaxial cables used for the other Ethernet systems. The network administrators in GMU's Mathematics Department are planning to replace the existing network with a twisted pair network in the coming financial year because it will bring them up to date with current technology and will save them significant time when installing new host computers and moving existing computers around.

One of the drawbacks of Ethernet technology is its limited cable length, which precludes any use of it other than for LANs. However, several Ethernet segments can be linked to one another using repeaters, bridges, or routers. Repeaters simply copy the signals between two or more segments so that all segments together will act as if they are one Ethernet. Due to timing requirements, there may not be more than four repeaters between any two hosts on the network. Bridges and routers are more sophisticated. They analyze incoming data and forward it only when the recipient host is not on the local Ethernet.

Ethernet works like a bus system, where a host may send packets (or frames) of up to 1,500 bytes to another host on the same Ethernet. A host is addressed by a six-byte address hardcoded into the firmware of its Ethernet network interface card (NIC). These addresses are usually written as a sequence of two-digit hex numbers separated by colons, as in aa:bb:cc:dd:ee:ff.
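As a small illustration of this notation, the following Python sketch converts between the six raw bytes of a hardware address and the colon-separated form. The helper names `format_mac` and `parse_mac` are made up for this example, and the address is just the placeholder pattern used in the text.

```python
def format_mac(raw):
    """Render a 6-byte hardware address as colon-separated two-digit hex."""
    if len(raw) != 6:
        raise ValueError("an Ethernet address is exactly six bytes long")
    return ":".join(f"{byte:02x}" for byte in raw)

def parse_mac(text):
    """Inverse of format_mac: turn 'aa:bb:cc:dd:ee:ff' back into six bytes."""
    raw = bytes(int(part, 16) for part in text.split(":"))
    if len(raw) != 6:
        raise ValueError("expected six colon-separated hex numbers")
    return raw

print(format_mac(bytes([0xAA, 0xBB, 0xCC, 0xDD, 0xEE, 0xFF])))  # aa:bb:cc:dd:ee:ff
```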

A frame sent by one station is seen by all attached stations, but only the destination host actually picks it up and processes it. If two stations try to send at the same time, a collision occurs. Collisions on an Ethernet are detected very quickly by the electronics of the interface cards and are resolved by the two stations aborting the send, each waiting a random interval and re-attempting the transmission. You'll hear lots of stories about collisions on Ethernet being a problem and that utilization of Ethernets is only about 30 percent of the available bandwidth because of them. Collisions on Ethernet are a normal phenomenon, and on a very busy Ethernet network you shouldn't be surprised to see collision rates of up to about 30 percent. Utilization of Ethernet networks is more realistically limited to about 60 percent before you need to start worrying about it.[7]
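The retry behavior after a collision can be sketched as follows. The text only says that each station waits a random interval; the doubling range used here (commonly called truncated binary exponential backoff) is the scheme usually described for Ethernet, and this toy model makes further simplifying assumptions: time is counted in abstract slots, only two stations compete, and both retry in lockstep. The function names are hypothetical.

```python
import random

def backoff_slots(collisions, rng=random):
    """Pick a random wait, in slot times, after `collisions` consecutive
    collisions. The range doubles each time and is capped at 2**10 slots."""
    exponent = min(collisions, 10)
    return rng.randrange(2 ** exponent)

def resolve(rng=random, max_attempts=16):
    """Two stations collide, back off, and retry until their waits differ.
    Returns how many collisions occurred before one station got through."""
    for collisions in range(1, max_attempts + 1):
        wait_a = backoff_slots(collisions, rng)
        wait_b = backoff_slots(collisions, rng)
        if wait_a != wait_b:
            # The station with the shorter wait transmits first; the
            # other sees the cable busy and sends afterwards.
            return collisions
    raise RuntimeError("too many collisions, giving up")

print(resolve(random.Random(1)))
```

Because the range of possible waits grows with every collision, two stations are very unlikely to keep choosing the same slot, which is why collisions are resolved quickly in practice.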

1.2.3. Other Types of Hardware

In larger installations, such as Groucho Marx University, Ethernet is usually not the only type of equipment used. There are many other data communications protocols available and in use. All of the protocols listed are supported by Linux, but due to space constraints we'll describe them only briefly. Many of the protocols have HOWTO documents that describe them in detail, so you should refer to those if you're interested in exploring those that we don't describe in this book.

At Groucho Marx University, each department's LAN is linked to the campus high-speed “backbone” network, which is a fiber optic cable running a network technology called Fiber Distributed Data Interface (FDDI). FDDI uses an entirely different approach to transmitting data, which basically involves sending around a number of tokens, with a station being allowed to send a frame only if it captures a token. The main advantage of a token-passing protocol is a reduction in collisions. Therefore, the protocol can more easily attain the full speed of the transmission medium, up to 100 Mbps in the case of FDDI. FDDI, being based on optical fiber, offers a significant advantage because its maximum cable length is much greater than that of wire-based technologies. It has limits of up to around 200 km, which makes it ideal for linking many buildings in a city, or as in GMU's case, many buildings on a campus.

Similarly, if there is any IBM computing equipment around, an IBM Token Ring network is quite likely to be installed. Token Ring is used as an alternative to Ethernet in some LAN environments, and offers the same sorts of advantages as FDDI in terms of achieving full wire speed, but at lower speeds (4 Mbps or 16 Mbps), and lower cost because it is based on wire rather than fiber. In Linux, Token Ring networking is configured in almost precisely the same way as Ethernet, so we don't cover it specifically.

Although it is much less likely today than in the past, other LAN technologies, such as ArcNet and DECNet, might be installed. Linux supports these too, but we don't cover them here.

Many national networks operated by telecommunications companies support packet switching protocols. Probably the most popular of these is a standard named X.25. Many Public Data Networks, like Tymnet in the U.S., Austpac in Australia, and Datex-P in Germany, offer this service. X.25 defines a set of networking protocols that describes how data terminal equipment, such as a host, communicates with data communications equipment (an X.25 switch). X.25 requires a synchronous data link, and therefore special synchronous serial port hardware. It is possible to use X.25 with normal serial ports if you use a special device called a PAD (Packet Assembler Disassembler). The PAD is a standalone device that provides asynchronous serial ports and a synchronous serial port. It manages the X.25 protocol so that simple terminal devices can make and accept X.25 connections. X.25 is often used to carry other network protocols, such as TCP/IP. Since IP datagrams cannot simply be mapped onto X.25 (or vice versa), they are encapsulated in X.25 packets and sent over the network. There is an experimental implementation of the X.25 protocol available for Linux.

A more recent protocol commonly offered by telecommunications companies is called Frame Relay. The Frame Relay protocol shares a number of technical features with the X.25 protocol, but is much more like the IP protocol in behavior. Like X.25, Frame Relay requires special synchronous serial hardware. Because of their similarities, many cards support both of these protocols. An alternative is available that requires no special internal hardware, again relying on an external device called a Frame Relay Access Device (FRAD) to manage the encapsulation of Ethernet packets into Frame Relay packets for transmission across a network. Frame Relay is ideal for carrying TCP/IP between sites. Linux provides drivers that support some types of internal Frame Relay devices.

If you need higher speed networking that can carry many different types of data, such as digitized voice and video, alongside your usual data, ATM (Asynchronous Transfer Mode) is probably what you'll be interested in. ATM is a new network technology that has been specifically designed to provide a manageable, high-speed, low-latency means of carrying data, and provide control over the Quality of Service (QoS). Many telecommunications companies are deploying ATM network infrastructure because it allows the convergence of a number of different network services into one platform, in the hope of achieving savings in management and support costs. ATM is often used to carry TCP/IP. The Networking-HOWTO offers information on the Linux support available for ATM.

Frequently, radio amateurs use their radio equipment to network their computers; this is commonly called packet radio. One of the protocols used by amateur radio operators is called AX.25 and is loosely derived from X.25. Amateur radio operators use the AX.25 protocol to carry TCP/IP and other protocols, too. AX.25, like X.25, requires serial hardware capable of synchronous operation, or an external device called a “Terminal Node Controller” to convert packets transmitted via an asynchronous serial link into packets transmitted synchronously. There are a variety of different sorts of interface cards available to support packet radio operation; these cards are generally referred to as being “Z8530 SCC based,” and are named after the most popular type of communications controller used in the designs. Two of the other protocols that are commonly carried by AX.25 are the NetRom and Rose protocols, which are network layer protocols. Since these protocols run over AX.25, they have the same hardware requirements. Linux supports a fully featured implementation of the AX.25, NetRom, and Rose protocols. The AX25-HOWTO is a good source of information on the Linux implementation of these protocols.

Other types of Internet access involve dialing up a central system over slow but cheap serial lines (telephone, ISDN, and so on). These require yet another protocol for transmission of packets, such as SLIP or PPP, which will be described later.

1.2.4. The Internet Protocol

Of course, you wouldn't want your networking to be limited to one Ethernet or one point-to-point data link. Ideally, you would want to be able to communicate with a host computer regardless of what type of physical network it is connected to. For example, in larger installations such as Groucho Marx University, you usually have a number of separate networks that have to be connected in some way. At GMU, the Math department runs two Ethernets: one with fast machines for professors and graduates, and another with slow machines for students. Both are linked to the FDDI campus backbone network.

This connection is handled by a dedicated host called a gateway that handles incoming and outgoing packets by copying them between the two Ethernets and the FDDI fiber optic cable. For example, if you are at the Math department and want to access quark on the Physics department's LAN from your Linux box, the networking software will not send packets to quark directly because it is not on the same Ethernet. Therefore, it has to rely on the gateway to act as a forwarder. The gateway (named sophus) then forwards these packets to its peer gateway niels at the Physics department, using the backbone network, with niels delivering them to the destination machine. Data flow between erdos and quark is shown in Figure 1-1.
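The decision erdos has to make for every outgoing packet (deliver directly, or hand it to the gateway) can be sketched in Python with the standard `ipaddress` module. The addressing here is purely hypothetical and chosen for illustration: the Math department's Ethernet is assumed to be the network 149.76.4.0/24, and sophus is assumed to have the address 149.76.4.1 on it. The address 149.76.12.4 for quark is the example address used later in this section.

```python
import ipaddress

# Hypothetical addressing, not taken from the text above: the Math
# department's Ethernet is assumed to be 149.76.4.0/24, and sophus is
# assumed to sit at 149.76.4.1 on it.
LOCAL_NET = ipaddress.ip_network("149.76.4.0/24")
GATEWAY = ipaddress.ip_address("149.76.4.1")   # sophus

def next_hop(destination):
    """Return the address a packet should be handed to on the local Ethernet."""
    dest = ipaddress.ip_address(destination)
    if dest in LOCAL_NET:
        return dest        # same Ethernet: deliver directly
    return GATEWAY         # anywhere else: let the gateway forward it

print(next_hop("149.76.12.4"))   # quark is on another LAN, so this is the gateway
print(next_hop("149.76.4.23"))   # a neighbour on the same Ethernet
```

A real host keeps this information in a routing table rather than in two constants, but the test it applies to each destination is essentially the same.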

This scheme of directing data to a remote host is called routing, and packets are often referred to as datagrams in this context. To facilitate things, datagram exchange is governed by a single protocol that is independent of the hardware used: IP, or Internet Protocol. In Chapter 2, we will cover IP and the issues of routing in greater detail.

The main benefit of IP is that it turns physically dissimilar networks into one apparently homogeneous network. This is called internetworking, and the resulting “meta-network” is called an internet. Note the subtle difference here between an internet and the Internet. The latter is the official name of one particular global internet.

Of course, IP also requires a hardware-independent addressing scheme. This is achieved by assigning each host a unique 32-bit number called the IP address. An IP address is usually written as four decimal numbers, one for each 8-bit portion, separated by dots. For example, quark might have an IP address of 0x954C0C04, which would be written as 149.76.12.4. This format is also called dotted decimal notation and sometimes dotted quad notation. It is increasingly going under the name IPv4 (for Internet Protocol, Version 4) because a new standard called IPv6 offers much more flexible addressing, as well as other modern features. It will be at least a year after the release of this edition before IPv6 is in use.
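The conversion between the 32-bit number and dotted decimal notation can be sketched in a few lines of Python. This is only an illustration; the function names to_dotted_quad and from_dotted_quad are our own and not part of any library.

```python
def to_dotted_quad(address: int) -> str:
    """Render a 32-bit IPv4 address as four decimal numbers separated by dots."""
    if not 0 <= address <= 0xFFFFFFFF:
        raise ValueError("an IPv4 address must fit in 32 bits")
    # Peel off the four 8-bit portions, most significant first.
    octets = [(address >> shift) & 0xFF for shift in (24, 16, 8, 0)]
    return ".".join(str(octet) for octet in octets)


def from_dotted_quad(text: str) -> int:
    """Parse dotted decimal notation back into the 32-bit number."""
    parts = text.split(".")
    if len(parts) != 4:
        raise ValueError("expected four dot-separated numbers")
    value = 0
    for part in parts:
        octet = int(part)
        if not 0 <= octet <= 255:
            raise ValueError("each portion must fit in 8 bits")
        value = (value << 8) | octet
    return value


if __name__ == "__main__":
    # quark's example address from the text
    print(to_dotted_quad(0x954C0C04))  # 149.76.12.4
```

Python's standard ipaddress module performs the same conversion; the hand-written version is shown only to make the 8-bit portions visible.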

You will notice that we now have three different types of addresses: first there is the host's name, like quark, then there are IP addresses, and finally, there are hardware addresses, like the 6-byte Ethernet address. All these addresses somehow have to match so that when you type rlogin quark, the networking software can be given quark's IP address; and when IP delivers any data to the Physics department's Ethernet, it somehow has to find out what Ethernet address corresponds to the IP address.

We will deal with these situations in Chapter 2. For now, it's enough to remember that these steps of finding addresses are called hostname resolution, for mapping hostnames onto IP addresses, and address resolution, for mapping the latter to hardware addresses.

1.2.5. IP Over Serial Lines

On serial lines, a “de facto” standard exists known as SLIP, or Serial Line IP. A modification of SLIP known as CSLIP, or Compressed SLIP, performs compression of IP headers to make better use of the relatively low bandwidth provided by most serial links. Another serial protocol is PPP, or the Point-to-Point Protocol. PPP is more modern than SLIP and includes a number of features that make it more attractive. Its main advantage over SLIP is that it isn't limited to transporting IP datagrams, but is designed to allow just about any protocol to be carried across it.

1.2.6. The Transmission Control Protocol

Sending datagrams from one host to another is not the whole story. If you log in to quark, you want to have a reliable connection between your rlogin process on erdos and the shell process on quark. Thus, the information sent to and fro must be split up into packets by the sender and reassembled into a character stream by the receiver. Trivial as it seems, this involves a number of complicated tasks.

A very important thing to know about IP is that, by intent, it is not reliable. Assume that ten people on your Ethernet started downloading the latest release of Netscape's web browser source code from GMU's FTP server. The amount of traffic generated might be too much for the gateway to handle, because it's too slow and it's tight on memory. Now if you happen to send a packet to quark, sophus might be out of buffer space for a moment and therefore unable to forward it. IP solves this problem by simply discarding it. The packet is irrevocably lost. It is therefore the responsibility of the communicating hosts to check the integrity and completeness of the data and retransmit it in case of error.

This process is performed by yet another protocol, Transmission Control Protocol (TCP), which builds a reliable service on top of IP. The essential property of TCP is that it uses IP to give you the illusion of a simple connection between the two processes on your host and the remote machine, so you don't have to care about how and along which route your data actually travels. A TCP connection works essentially like a two-way pipe that both processes may write to and read from. Think of it as a telephone conversation.

TCP identifies the end points of such a connection by the IP addresses of the two hosts involved and the number of a port on each host. Ports may be viewed as attachment points for network connections. To strain the telephone example a little more: if you imagine that cities are like hosts, you might compare IP addresses to area codes (where numbers map to cities), and port numbers to local codes (where numbers map to individual people's telephones). An individual host may support many different services, each distinguished by its own port number.

In the rlogin example, the client application (rlogin) opens a port on erdos and connects to port 513 on quark, to which the rlogind server is known to listen. This action establishes a TCP connection. Using this connection, rlogind performs the authorization procedure and then spawns the shell. The shell's standard input and output are redirected to the TCP connection, so that anything you type to rlogin on your machine will be passed through the TCP stream and be given to the shell as standard input.
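The "two-way pipe" behavior can be tried on a single machine. The following is a minimal sketch, not an rlogin implementation: it assumes a working loopback interface, lets the kernel choose a free port instead of the privileged port 513, and uses a toy server that simply returns its input in upper case. The names serve_once and tcp_round_trip are hypothetical.

```python
import socket
import threading


def serve_once(listener: socket.socket) -> None:
    """Accept one connection and send back whatever arrives, in upper case."""
    conn, _peer = listener.accept()
    with conn:
        while True:
            data = conn.recv(1024)
            if not data:  # the client has closed its sending side
                break
            conn.sendall(data.upper())


def tcp_round_trip(message: bytes) -> bytes:
    """Send message over a loopback TCP connection and collect the reply."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        # Port 0 asks the kernel for any free port (an assumption made
        # for this sketch; a real rlogind listens on port 513).
        listener.bind(("127.0.0.1", 0))
        listener.listen(1)
        host, port = listener.getsockname()

        worker = threading.Thread(target=serve_once, args=(listener,))
        worker.start()

        chunks = []
        with socket.create_connection((host, port)) as client:
            client.sendall(message)
            client.shutdown(socket.SHUT_WR)  # signal end of input
            while True:
                chunk = client.recv(1024)
                if not chunk:
                    break
                chunks.append(chunk)
        worker.join()
    return b"".join(chunks)


if __name__ == "__main__":
    print(tcp_round_trip(b"hello quark"))
```

Both ends read and write the same connection as a byte stream; neither has to know how the data was split into packets along the way.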

1.2.7. The User Datagram Protocol

Of course, TCP isn't the only user protocol in TCP/IP networking. Although suitable for applications like rlogin, the overhead involved is prohibitive for applications like NFS, which instead uses a sibling protocol of TCP called UDP, or User Datagram Protocol. Just like TCP, UDP allows an application to contact a service on a certain port of the remote machine, but it doesn't establish a connection for this. Instead, you use it to send single packets to the destination service—hence its name.

Assume you want to request a small amount of data from a database server. It takes at least three datagrams to establish a TCP connection, another three to send and confirm a small amount of data each way, and another three to close the connection. UDP provides us with a means of using only two datagrams to achieve almost the same result. UDP is said to be connectionless, and it doesn't require us to establish and close a session. We simply put our data into a datagram and send it to the server; the server formulates its reply, puts the data into a datagram addressed back to us, and transmits it back. While this is both faster and more efficient than TCP for simple transactions, UDP was not designed to deal with datagram loss. It is up to the application, a name server for example, to take care of this.
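The two-datagram exchange described above might look like the following sketch. It assumes a working loopback interface and runs client and server in one process purely for illustration; the function name udp_query and the reply format are made up for this example. The timeout stands in for the loss handling that UDP leaves to the application.

```python
import socket


def udp_query(request: bytes, timeout: float = 2.0) -> bytes:
    """Exchange one request datagram and one reply datagram over loopback."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as server, \
         socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as client:
        # Port 0 lets the kernel pick a free port for this sketch.
        server.bind(("127.0.0.1", 0))
        # UDP does not report lost datagrams, so the application has to
        # decide how long to wait before giving up or retrying.
        server.settimeout(timeout)
        client.settimeout(timeout)

        # Datagram 1: the request. No connection is set up beforehand.
        client.sendto(request, server.getsockname())
        data, client_addr = server.recvfrom(1024)

        # Datagram 2: the reply, addressed back to the sender.
        server.sendto(b"reply to " + data, client_addr)
        reply, _server_addr = client.recvfrom(1024)
    return reply


if __name__ == "__main__":
    print(udp_query(b"lookup"))
```

If either datagram were lost, recvfrom would raise a timeout error, and it would be up to the caller to resend the request.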

1.2.8. More on Ports

Ports may be viewed as attachment points for network connections. If an application wants to offer a certain service, it attaches itself to a port and waits for clients (this is also called listening on the port). A client who wants to use this service allocates a port on its local host and connects to the server's port on the remote host. The same port may be open on many different machines, but on each machine only one process can open a port at any one time.

An important property of ports is that once a connection has been established between the client and the server, another copy of the server may attach to the server port and listen for more clients. This property permits, for instance, several concurrent remote logins to the same host, all using the same port 513. TCP is able to tell these connections from one another because they all come from different ports or hosts. For example, if you log in twice to quark from erdos, the first rlogin client will use the local port 1023, and the second one will use port 1022. Both, however, will connect to the same port 513 on quark. The two connections will be distinguished by use of the port numbers used at erdos.
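This can be observed directly. The sketch below, which assumes a working loopback interface and uses a kernel-chosen port rather than 513, opens two connections to the same listening port and reports the port numbers involved. The function name two_logins is hypothetical, and the local ports the kernel hands out will differ from the 1023 and 1022 used in the example above.

```python
import socket


def two_logins():
    """Open two connections to one server port; return the ports involved."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind(("127.0.0.1", 0))  # port 0: let the kernel choose
        listener.listen(2)
        server_addr = listener.getsockname()

        first = socket.create_connection(server_addr)
        second = socket.create_connection(server_addr)
        accepted = [listener.accept() for _ in range(2)]
        try:
            # Each client socket received its own local port.
            client_ports = [first.getsockname()[1], second.getsockname()[1]]
            # On the server side, both connections use the same local port.
            server_ports = [conn.getsockname()[1] for conn, _peer in accepted]
        finally:
            for sock in (first, second, *(conn for conn, _peer in accepted)):
                sock.close()
    return server_addr[1], client_ports, server_ports


if __name__ == "__main__":
    server_port, client_ports, server_ports = two_logins()
    print("server port:", server_port)
    print("client ports:", client_ports)
    print("server-side ports:", server_ports)
```

The server end of both connections carries the same port number; only the client's address and port tell the two apart.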

This example shows the use of ports as rendezvous points, where a client contacts a specific port to obtain a specific service. In order for a client to know the proper port number, an agreement has to be reached between the administrators of both systems on the assignment of these numbers. For services that are widely used, such as rlogin, these numbers have to be administered centrally. This is done by the IETF (Internet Engineering Task Force), which regularly releases an RFC titled Assigned Numbers (RFC-1700). It describes, among other things, the port numbers assigned to well-known services. Linux uses a file called /etc/services that maps service names to numbers.

It is worth noting that although both TCP and UDP connections rely on ports, these numbers do not conflict. This means that TCP port 513, for example, is different from UDP port 513. In fact, these ports serve as access points for two different services, namely rlogin (TCP) and rwho (UDP).
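To illustrate how a services table keeps TCP and UDP port numbers apart, here is a small sketch that parses text in the /etc/services format. The two sample entries are an illustrative excerpt embedded in the code, not a copy of any particular system's file, and parse_services is a name of our own choosing.

```python
SAMPLE_SERVICES = """\
# service-name  port/protocol  [aliases]
login           513/tcp
who             513/udp        whod
"""


def parse_services(text: str) -> dict:
    """Map (port, protocol) pairs to service names."""
    table = {}
    for line in text.splitlines():
        # Everything after a '#' is a comment.
        line = line.split("#", 1)[0].strip()
        if not line:
            continue
        name, port_proto, *_aliases = line.split()
        port, proto = port_proto.split("/")
        # The protocol is part of the key, so 513/tcp and 513/udp
        # are separate entries that do not conflict.
        table[(int(port), proto)] = name
    return table


if __name__ == "__main__":
    services = parse_services(SAMPLE_SERVICES)
    print(services[(513, "tcp")])
    print(services[(513, "udp")])
```

On a running system, the C library functions getservbyname and getservbyport (available in Python as socket.getservbyname and socket.getservbyport) perform this lookup for you.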

1.2.9. The Socket Library

In Unix operating systems, the software performing all the tasks and protocols described above is usually part of the kernel, and so it is in Linux. The programming interface most common in the Unix world is the Berkeley Socket Library. Its name derives from a popular analogy that views ports as sockets and connecting to a port as plugging in. It provides the bind call to specify a remote host, a transport protocol, and a service that a program can connect or listen to (using connect, listen, and accept). The socket library is somewhat more general in that it provides not only a class of TCP/IP-based sockets (the AF_INET sockets), but also a class that handles connections local to the machine (the AF_UNIX class). Some implementations can also handle other classes, like the XNS (Xerox Networking System) protocol or X.25.

In Linux, the socket library is part of the standard libc C library. It supports the AF_INET and AF_INET6 sockets for TCP/IP and AF_UNIX for Unix domain sockets. It also supports AF_IPX for Novell's network protocols, AF_X25 for the X.25 network protocol, AF_ATMPVC and AF_ATMSVC for the ATM network protocol and AF_AX25, AF_NETROM, and AF_ROSE sockets for Amateur Radio protocol support. Other protocol families are being developed and will be added in time.
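As a rough way to see which of these address families a given installation knows about, the following sketch checks which AF_ constants Python's socket module exposes. Note the assumption: the presence of a constant reflects how Python and the C library were built, not whether the running kernel has the corresponding protocol support loaded. The function name available_families is hypothetical.

```python
import socket

# The address families named in the text.
FAMILIES = [
    "AF_INET", "AF_INET6", "AF_UNIX", "AF_IPX", "AF_X25",
    "AF_ATMPVC", "AF_ATMSVC", "AF_AX25", "AF_NETROM", "AF_ROSE",
]


def available_families() -> dict:
    """Report, for each family name, whether the socket module defines it."""
    return {name: hasattr(socket, name) for name in FAMILIES}


if __name__ == "__main__":
    for name, present in available_families().items():
        print(f"{name:10} {'defined' if present else 'not defined'}")
```

Creating a socket of a given family, for example with socket.socket(socket.AF_AX25, ...), can still fail if the kernel was built without that protocol.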

1.3. UUCP Networks

Unix-to-Unix Copy (UUCP) started out as a package of programs that transferred files over serial lines, scheduled those transfers, and initiated execution of programs on remote sites. It has undergone major changes since its first implementation in the late seventies, but it is still rather spartan in the services it offers. Its main application is still in Wide Area Networks, based on periodic dialup telephone links.

UUCP was first developed by Bell Laboratories in 1977 for communication between their Unix development sites. In mid-1978, this network already connected over 80 sites. It was running email as an application, as well as remote printing. However, the system's central use was in distributing new software and bug fixes. Today, UUCP is not confined solely to the Unix environment. There are free and commercial ports available for a variety of platforms, including AmigaOS, DOS, and Atari's TOS.

One of the main disadvantages of UUCP networks is that they operate in batches. Rather than keeping a permanent connection established between hosts, UUCP uses temporary connections. A UUCP host machine might dial in to another UUCP host only once a day, and then only for a short period of time. While it is connected, it will transfer all of the news, email, and files that have been queued, and then disconnect. It is this queuing that limits the sorts of applications that UUCP can be applied to. In the case of email, a user may prepare an email message and post it. The message will stay queued on the UUCP host machine until it dials in to another UUCP host to transfer the message. This is fine for network services such as email, but is no use at all for services such as rlogin.

Despite these limitations, there are still many UUCP networks operating all over the world, run mainly by hobbyists, which offer private users network access at reasonable prices. The main reason for the longtime popularity of UUCP was that it was very cheap compared to having your computer directly connected to the Internet. To make your computer a UUCP node, all you needed was a modem, a working UUCP implementation, and another UUCP node that was willing to feed you mail and news. Many people were prepared to provide UUCP feeds to individuals because such connections didn't place much demand on their existing network.

We cover the configuration of UUCP in a chapter of its own later in the book, but we won't focus on it too heavily, as it's being replaced rapidly with TCP/IP, now that cheap Internet access has become commonly available in most parts of the world.

1.4. Linux Networking